RAIE: Region-Aware Incremental Preference Editing with LoRA for LLM-based Recommendation

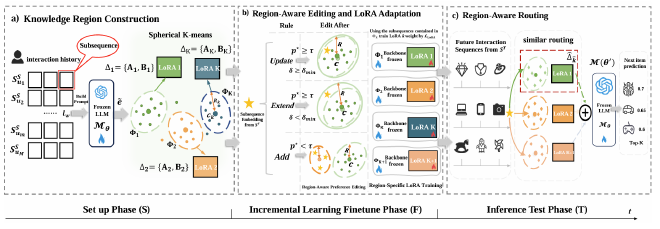

We study the problem of user preference drift in LLM-based recommendation and propose RAIE, a region-aware incremental editing framework. Instead of global updates or instance-level edits, RAIE introduces preference regions as structured units for localized adaptation. This design enables efficient continual learning while preserving stable preferences.

Main Contributions

- Region-based Preference Modeling: Introduces preference regions as semantically coherent clusters of user behaviors.

- Region-aware Incremental Editing: Designs three editing operations (Update / Expand / Add) to model different drift patterns.

- Region-specific LoRA Adaptation: Assigns each region a dedicated LoRA adapter for localized parameter updates.

Method

1. Overview

RAIE decomposes preference adaptation into three stages: (1) knowledge region construction, (2) region-aware editing, and (3) routing-based inference.

2. Knowledge Region Construction

User interaction sequences are segmented into subsequences and encoded by a frozen LLM. These representations are clustered via spherical k-means to form preference regions:

- each region has a center $c_k$ and a radius $R_k$

- each region corresponds to a specific interest pattern
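The clustering step can be sketched with NumPy as below. This is a minimal sketch under stated assumptions, not RAIE's implementation: it assumes the frozen-LLM subsequence embeddings are already the rows of a matrix `X`, and it defines the radius $R_k$ as the largest cosine distance ($1 - \cos$) from any member to its center, which may differ from the paper's definition. The function name `spherical_kmeans` is hypothetical.

```python
import numpy as np

def spherical_kmeans(X, k, n_iter=50, seed=0):
    """Cluster row vectors by cosine similarity (spherical k-means).

    Returns unit-norm centers c_k, hard labels, and a radius R_k per region.
    ASSUMPTION: R_k is the largest cosine distance (1 - cos) from any member
    to its center; the paper's exact radius definition may differ.
    """
    rng = np.random.default_rng(seed)
    # Project embeddings onto the unit sphere so dot products are cosines.
    X = X / np.linalg.norm(X, axis=1, keepdims=True)
    centers = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(n_iter):
        labels = np.argmax(X @ centers.T, axis=1)  # nearest center by cosine
        new_centers = centers.copy()
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:  # empty clusters keep their old center
                s = members.sum(axis=0)
                new_centers[j] = s / np.linalg.norm(s)  # mean direction on the sphere
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    labels = np.argmax(X @ centers.T, axis=1)
    radii = np.zeros(k)
    for j in range(k):
        members = X[labels == j]
        if len(members) > 0:
            radii[j] = np.max(1.0 - members @ centers[j])
    return centers, labels, radii
```

Normalizing before clustering means that only the direction of each embedding matters, which matches the cosine-based notion of similarity used for regions.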

3. Region-Aware Editing and Adaptation

For each incoming subsequence, RAIE:

- computes similarity to existing regions

- selects an editing operation based on confidence:

  - Update: refine region center (small drift)

  - Expand: enlarge region boundary (moderate drift)

  - Add: create a new region (emerging preference)
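One way to realize this decision is sketched below. The rule is an assumption for illustration only: "confidence" is read as the cosine distance from the subsequence embedding to its nearest region center, compared against that region's radius plus a hypothetical `expand_margin`; the step size `lr` and the default `new_radius` are likewise hypothetical. The three branches correspond to the Update, Expand and Add operations above.

```python
import numpy as np

def edit_regions(centers, radii, x, expand_margin=0.1, lr=0.1, new_radius=0.05):
    """Apply one hypothetical Update / Expand / Add decision for embedding x.

    ASSUMPTION: the decision rule (distance vs. radius plus a margin), lr and
    new_radius are illustrative, not RAIE's exact rule. Inputs are not
    mutated; returns new centers, new radii, the chosen op and region index.
    """
    x = x / np.linalg.norm(x)
    dist = 1.0 - centers @ x  # cosine distance to every (unit-norm) center
    k = int(np.argmin(dist))
    d = float(dist[k])
    centers, radii = centers.copy(), radii.copy()
    if d <= radii[k]:
        # Update: x lies inside region k, so nudge its center toward x (small drift).
        c = (1 - lr) * centers[k] + lr * x
        centers[k] = c / np.linalg.norm(c)
        op = "update"
    elif d <= radii[k] + expand_margin:
        # Expand: x is just outside the boundary, so enlarge the radius to cover it.
        radii[k] = d
        op = "expand"
    else:
        # Add: x is far from every region, so open a new region centered at x.
        centers = np.vstack([centers, x])
        radii = np.append(radii, new_radius)
        k = len(radii) - 1
        op = "add"
    return centers, radii, op, k
```

Keeping the three cases as explicit branches makes it easy to record which edit each incoming subsequence triggered, and therefore which region's adapter needs retraining.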

Each region is associated with a dedicated LoRA adapter, trained only on its regional data.
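A per-region adapter in the standard LoRA form ($\Delta W = \tfrac{\alpha}{r} BA$, with $B$ initialized to zero) can be sketched with NumPy as follows. The class and helper names, the rank and scaling defaults, and the squared-error objective are assumptions made to keep the example self-contained; RAIE trains its adapters inside an LLM recommender with its own loss. What the sketch illustrates is the isolation: each region's adapter is updated only on that region's examples, and the frozen weight `W` is never written.

```python
import numpy as np

class LoRAAdapter:
    """Low-rank update delta_W = (alpha / r) * B @ A added to a frozen weight W."""

    def __init__(self, d_in, d_out, r=4, alpha=8.0, seed=0):
        rng = np.random.default_rng(seed)
        self.A = rng.normal(0.0, 0.01, size=(r, d_in))
        self.B = np.zeros((d_out, r))  # zero init: a fresh adapter is a no-op
        self.scale = alpha / r

    def forward(self, W, x):
        # x: (n, d_in); frozen W: (d_out, d_in)
        return x @ W.T + self.scale * (x @ self.A.T) @ self.B.T

    def sgd_step(self, W, x, y, lr=0.1):
        """One gradient step on 0.5 * mean squared error, updating only A and B."""
        n = len(x)
        err = self.forward(W, x) - y                     # (n, d_out)
        z = x @ self.A.T                                 # (n, r)
        grad_B = self.scale * err.T @ z / n              # (d_out, r)
        grad_A = self.scale * (err @ self.B).T @ x / n   # (r, d_in)
        self.B -= lr * grad_B
        self.A -= lr * grad_A

def train_region_adapters(W, X, Y, labels, steps=200, **kw):
    """Hypothetical helper: train one adapter per region on that region's rows only."""
    adapters = {}
    for k in np.unique(labels):
        mask = labels == k
        adapter = LoRAAdapter(W.shape[1], W.shape[0], seed=int(k))
        for _ in range(steps):
            adapter.sgd_step(W, X[mask], Y[mask], **kw)
        adapters[int(k)] = adapter
    return adapters
```

Because only `A` and `B` receive gradients, retraining the adapter of a drifted region leaves the backbone and every other region's adapter untouched.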

4. Inference Routing

At inference time:

- map sequence → region

- activate the corresponding LoRA adapter

This enables dynamic, context-aware adaptation without modifying the backbone model.
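Routing can be sketched as below, assuming nearest-center routing by cosine similarity and treating each adapter as a callable that returns the low-rank correction for a hidden state. The function names and the fall-back to the bare backbone when a region has no adapter are hypothetical choices, not details taken from the paper.

```python
import numpy as np

def route(x_emb, centers):
    """Return the id of the region whose unit-norm center is most cosine-similar to x_emb."""
    x = x_emb / np.linalg.norm(x_emb)
    return int(np.argmax(centers @ x))

def routed_forward(W, h, x_emb, centers, adapters):
    """Frozen backbone layer W plus the LoRA correction of the routed region only.

    adapters maps region id -> callable(h) returning the low-rank delta (hypothetical).
    """
    k = route(x_emb, centers)
    out = h @ W.T             # backbone output; W itself is never modified
    delta = adapters.get(k)
    if delta is not None:
        out = out + delta(h)  # activate only region k's adapter
    return k, out
```

Only one adapter is evaluated per input, so the inference cost stays close to that of the backbone regardless of how many regions have been created.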

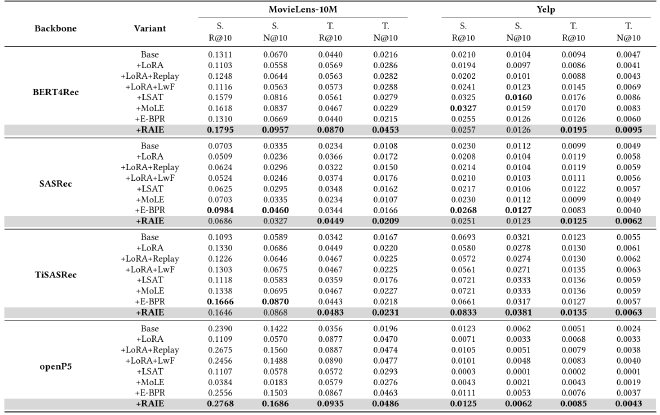

Experimental Setup

- Datasets: MovieLens-10M, Yelp

- Backbones: BERT4Rec, SASRec, TiSASRec, OpenP5

- Baselines: LoRA, Replay, LwF, LSAT, MoLE, E-BPR

- Metrics: Recall@10, NDCG@10
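For reference, both metrics can be computed as below, assuming the common next-item protocol with a single held-out target per user; the paper's exact evaluation protocol (for example, full versus sampled ranking) is not restated on this page. With one relevant item, Recall@K is the hit rate, and NDCG@K reduces to $1/\log_2(\text{rank}+1)$ when the target appears at 1-based position rank of at most K, and 0 otherwise. The function name is hypothetical.

```python
import math

def recall_ndcg_at_k(ranked_lists, targets, k=10):
    """Mean Recall@k and NDCG@k, assuming one held-out target item per user."""
    recall = ndcg = 0.0
    for ranked, target in zip(ranked_lists, targets):
        top_k = list(ranked[:k])
        if target in top_k:
            rank = top_k.index(target) + 1     # 1-based position of the target
            recall += 1.0
            ndcg += 1.0 / math.log2(rank + 1)  # ideal DCG is 1 for a single target
    n = len(targets)
    return recall / n, ndcg / n
```

For example, with three users whose targets are ranked first, third, and outside the top 10, Recall@10 is 2/3 and NDCG@10 is (1 + 0.5 + 0) / 3 = 0.5.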

Representative Findings

- Superior Adaptation: RAIE achieves the best performance on future data (Test split).

- Strong Retention: Maintains competitive performance on historical data (Set-up split).

- Balanced Learning: Effectively resolves the stability–plasticity trade-off.

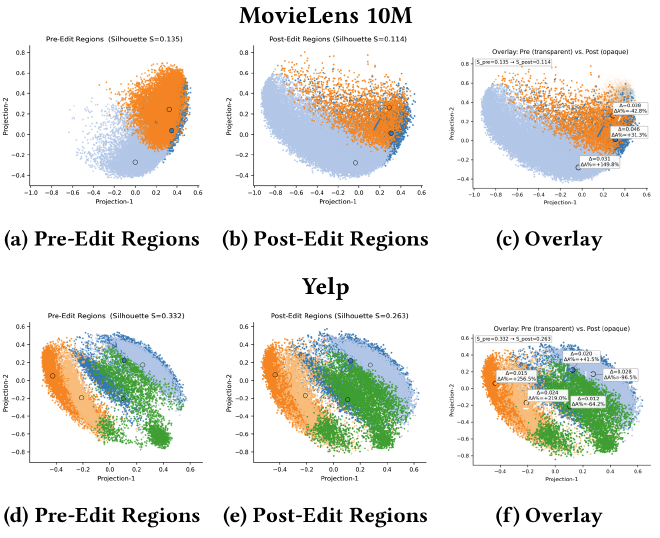

- Interpretable Adaptation: Visualization shows that RAIE preserves global region structure while performing localized adjustments, leading to controlled and interpretable preference updates.

Citation

WWW 2026

Jin Zeng, Yupeng Qi, Hui Li, Chengming Li, Ziyu Lyu, Lixin Cui, Lu Bai. 2026. RAIE: Region-Aware Incremental Preference Editing with LoRA for LLM-based Recommendation. arXiv preprint arXiv:2603.00638.

BibTeX

@article{zeng2026raie,
  title={RAIE: Region-Aware Incremental Preference Editing with LoRA for LLM-based Recommendation},
  author={Zeng, Jin and Qi, Yupeng and Li, Hui and Li, Chengming and Lyu, Ziyu and Cui, Lixin and Bai, Lu},
  journal={arXiv preprint arXiv:2603.00638},
  year={2026}
}